- AIPressRoom

- Posts

- MLPerf 3.1 provides giant language mannequin benchmarks for inference

MLPerf 3.1 provides giant language mannequin benchmarks for inference

Head over to our on-demand library to view classes from VB Rework 2023. Register Here

MLCommons is rising its suite of MLPerf AI benchmarks with the addition of testing for giant language fashions (LLMs) for inference and a brand new benchmark that measures efficiency of storage methods for machine studying (ML) workloads.

MLCommons is a vendor neutral, multi-stakeholder group that goals to supply a stage taking part in area for distributors to report on completely different facets of AI efficiency with the MLPerf set of benchmarks. The brand new MLPerf Inference 3.1 benchmarks launched immediately are the second main replace of the outcomes this 12 months, following the 3.0 results that got here out in April. The MLPerf 3.1 benchmarks embody a big set of information with greater than 13,500 efficiency outcomes.

Submitters embody: ASUSTeK, Azure, cTuning, Join Tech, Dell, Fujitsu, Giga Computing, Google, H3C, HPE, IEI, Intel, Intel-Habana-Labs, Krai, Lenovo, Moffett, Neural Magic, Nvidia, Nutanix, Oracle, Qualcomm, Quanta Cloud Expertise, SiMA, Supermicro, TTA and xFusion.

Continued efficiency enchancment

A typical theme throughout MLPerf benchmarks with every replace is the continued enchancment in efficiency for distributors — and the MLPerf 3.1 Inference outcomes observe that sample. Whereas there are a number of sorts of testing and configurations for the inference benchmarks, MLCommons founder and govt director David Kanter mentioned in a press briefing that many submitters improved their efficiency by 20% or extra over the three.0 benchmark.

Occasion

VB Rework 2023 On-Demand

Did you miss a session from VB Rework 2023? Register to entry the on-demand library for all of our featured classes.

Past continued efficiency positive aspects, MLPerf is constant to develop with the three.1 inference benchmarks.

“We’re evolving the benchmark suite to mirror what’s occurring,” he mentioned. “Our LLM benchmark is model new this quarter and actually displays the explosion of generative AI giant language fashions.”

What the brand new MLPerf Inference 3.1 LLM benchmarks are all about

This isn’t the primary time MLCommons has tried to benchmark LLM efficiency.

Again in June, the MLPerf 3.0 Training benchmarks added LLMs for the primary time. Coaching LLMs, nevertheless, is a really completely different job than working inference operations.

“One of many important variations is that for inference, the LLM is basically performing a generative job because it’s writing a number of sentences,” Kanter mentioned.

The MLPerf Coaching benchmark for LLM makes use of the GPT-J 6B (billion) parameter mannequin to carry out textual content summarization on the CNN/Every day Mail dataset. Kanter emphasised that whereas the MLPerf coaching benchmark focuses on very giant basis fashions, the precise job MLPerf is performing with the inference benchmark is consultant of a wider set of use instances that extra organizations can deploy.

“Many people merely don’t have the compute or the information to assist a extremely giant mannequin,” mentioned Kanter. “The precise job we’re performing with our inference benchmark is textual content summarization.”

Inference isn’t nearly GPUs — at the very least in line with Intel

Whereas high-end GPU accelerators are sometimes on the high of the MLPerf itemizing for coaching and inference, the large numbers aren’t what all organizations are on the lookout for — at the very least in line with Intel.

Intel silicon is properly represented on the MLPerf Inference 3.1 with outcomes submitted for Habana Gaudi accelerators, 4th Gen Intel Xeon Scalable processors and Intel Xeon CPU Max Sequence processors. Based on Intel, the 4th Gen Intel Xeon Scalable carried out properly on the GPT-J information summarization job, summarizing one paragraph per second in real-time server mode.

In response to a query from VentureBeat throughout the Q&A portion of the MLCommons press briefing, Intel’s senior director of AI merchandise Jordan Plawner commented that there’s range in what organizations want for inference.

“On the finish of the day, enterprises, companies and organizations must deploy AI in manufacturing and that clearly must be carried out in every kind of compute,” mentioned Plawner. “To have so many representatives of each software program and {hardware} exhibiting that it [inference] might be run in every kind of compute can be a main indicator of the place the market goes subsequent, which is now scaling out AI fashions, not simply constructing them.”

Nvidia claims Grace Hopper MLPef Inference positive aspects, with extra to come back

Courtesy Nvidia

Whereas Intel is eager to point out how CPUs are priceless for inference, GPUs from Nvidia are properly represented within the MLPerf Inference 3.1 benchmarks.

The MLPerf Inference 3.1 benchmarks are the primary time Nvidia’s GH200 Grace Hopper Superchip was included. The Grace Hopper superchip pairs an Nvidia CPU, together with a GPU to optimize AI workloads.

“Grace Hopper made a really robust first exhibiting delivering as much as 17% extra efficiency versus our H100 GPU submissions, which we’re already delivering throughout the board management,” Dave Salvator, director of AI at Nvidia, mentioned throughout a press briefing.

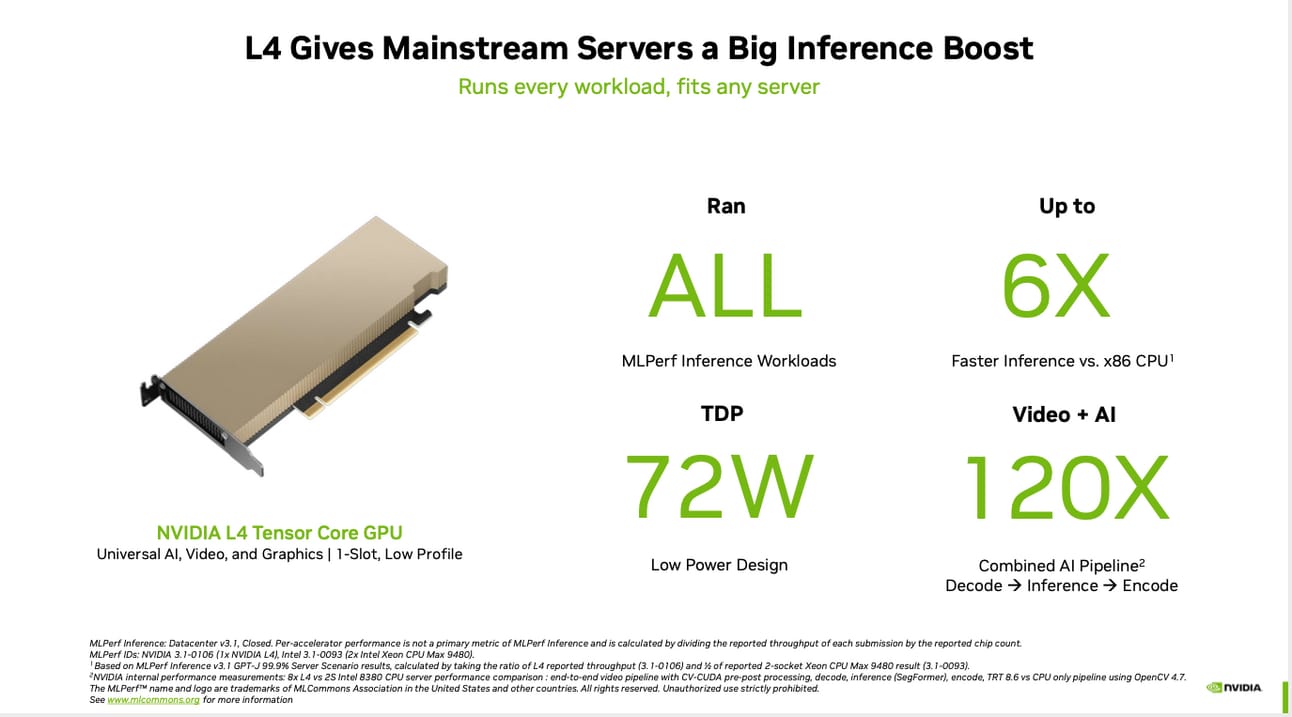

The Grace Hopper is meant for the biggest and most demanding workloads, however that’s not all that Nvidia goes after. The Nvidia L4 GPUs had been additionally highlighted by Salvator for his or her MLPerf Inference 3.1 outcomes.

“L4 additionally had a really robust exhibiting as much as 6x extra efficiency versus the very best x86 CPUs submitted this spherical,” he mentioned.

VentureBeat’s mission is to be a digital city sq. for technical decision-makers to achieve information about transformative enterprise expertise and transact. Discover our Briefings.

The post MLPerf 3.1 provides giant language mannequin benchmarks for inference appeared first on AIPressRoom.